Before we get carried away creating pods, services, deployments, etc., let's spare a thought for security... (DevSecPenguinOps, here we come!). In the context of this recipe, security refers to safeguarding your data from accidental loss, as well as from malicious impact.

Now that we're playing in the deep end with Kubernetes, we'll need a Cloud-native backup solution...

It bears repeating though - don't be like Cameron. Back up your stuff.

This recipe employs a clever tool (miracle2k/k8s-snapshots), running inside your cluster, to trigger automated snapshots of your persistent volumes, using your cloud provider's APIs.

Geek-Fu required : 🐒🐒 (medium - minor adjustments may be required)

Preparation

Create RoleBinding (GKE only)

If you're running GKE, run the following to create a RoleBinding, allowing your user to grant rights-it-doesn't-currently-have to the service account responsible for creating the snapshots:
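The command itself is not shown above. Here is a minimal sketch, assuming that a cluster-wide `cluster-admin` binding for your own Google account is acceptable (note that this creates a ClusterRoleBinding). The function name, the binding name, and the example email address are placeholders and are not part of the original recipe:

```shell
# Sketch only: the kubectl call is wrapped in a function so nothing runs until you call it.
# grant_cluster_admin and snapshot-admin-binding are hypothetical names.
grant_cluster_admin() {
  user_email="$1"   # the Google account you use to manage this cluster
  kubectl create clusterrolebinding snapshot-admin-binding \
    --clusterrole=cluster-admin \
    --user="$user_email"
}
```

Call it with your own account, for example `grant_cluster_admin you@example.com`.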

Why do we have to do this? Check this blog post for details

Apply RBAC

If your cluster is RBAC-enabled (it probably is), you'll need to create a ClusterRole and ClusterRoleBinding to allow k8s-snapshots to see your PVs and friends:
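One way to do this is to apply an RBAC manifest straight from the upstream project. The sketch below assumes that the miracle2k/k8s-snapshots repository still ships an `rbac.yaml` at the root of its `master` branch; check the URL before relying on it. The function name is a placeholder:

```shell
# Assumption: the upstream repository provides an rbac.yaml manifest at this path.
RBAC_MANIFEST_URL="https://raw.githubusercontent.com/miracle2k/k8s-snapshots/master/rbac.yaml"

# apply_snapshots_rbac is a hypothetical helper; it only runs kubectl when called.
apply_snapshots_rbac() {
  kubectl apply -f "$RBAC_MANIFEST_URL"
}
```

Run `apply_snapshots_rbac` once you have reviewed the manifest and are happy with the permissions it grants.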

Confirm your pod is running and happy by running kubectl get pods -n kube-system, and kubectl -n kube-system logs k8s-snapshots<tab-to-auto-complete>

Pick PVs to snapshot

k8s-snapshots relies on annotations to tell it how frequently to snapshot your PVs. A PV requires the backup.kubernetes.io/deltas annotation in order to be snapshotted.

From the k8s-snapshots README:

The generations are defined by a list of deltas formatted as ISO 8601 durations (this differs from tarsnapper). PT60S or PT1M means a minute, PT12H or P0.5D is half a day, P1W or P7D is a week. The number of backups in each generation is implied by its and the parent generation's delta.

For example, given the deltas PT1H P1D P7D, the first generation will consist of 24 backups each one hour older than the previous (or the closest approximation possible given the available backups), the second generation of 7 backups each one day older than the previous, and backups older than 7 days will be discarded for good.

The most recent backup is always kept.

The first delta is the backup interval.
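To sanity-check a deltas string before you annotate anything, the arithmetic from the README excerpt can be sketched in shell. This is an illustration of the rule as quoted (each generation holds "next delta divided by this delta" backups), not code from k8s-snapshots itself. Both function names are made up for this example, and the parser only handles single-component, whole-number durations such as PT1H, P1D or P1W:

```shell
# Convert a single-component ISO 8601 duration (e.g. PT1H, P1D, P1W) to seconds.
# Sketch only: fractions (P0.5D) and combined forms (P1DT12H) are rejected.
duration_to_seconds() {
  case "$1" in
    PT*S) unit=1;      n="${1#PT}"; n="${n%S}" ;;
    PT*M) unit=60;     n="${1#PT}"; n="${n%M}" ;;
    PT*H) unit=3600;   n="${1#PT}"; n="${n%H}" ;;
    P*D)  unit=86400;  n="${1#P}";  n="${n%D}" ;;
    P*W)  unit=604800; n="${1#P}";  n="${n%W}" ;;
    *)    n="" ;;
  esac
  case "$n" in
    ''|*[!0-9]*) echo "unsupported duration: $1" >&2; return 1 ;;
  esac
  echo $(( $n * $unit ))
}

# Print "<delta> <count>" per generation, where count = next delta / this delta.
# The last delta has no line of its own: it is the age at which backups are discarded.
backups_per_generation() {
  prev=""
  for delta in $1; do
    if [ -n "$prev" ]; then
      prev_s="$(duration_to_seconds "$prev")" || return 1
      cur_s="$(duration_to_seconds "$delta")" || return 1
      echo "$prev $(( $cur_s / $prev_s ))"
    fi
    prev="$delta"
  done
}

backups_per_generation "PT1H P1D P7D"
# prints:
# PT1H 24
# P1D 7
```

This matches the README's example: 24 hourly backups, then 7 daily ones, with anything older than 7 days discarded.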

To add the annotation to an existing PV, run something like this:
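The command is not shown above. A minimal sketch using `kubectl patch` follows; the helper name is hypothetical, and you would substitute a real PV name from `kubectl get pv` along with whatever deltas suit you:

```shell
# annotate_pv is a hypothetical helper; it only runs kubectl when called.
# $1 = PV name as listed by `kubectl get pv`, $2 = deltas string, e.g. "P1D P7D"
annotate_pv() {
  pv_name="$1"
  deltas="$2"
  kubectl patch pv "$pv_name" -p \
    "{\"metadata\": {\"annotations\": {\"backup.kubernetes.io/deltas\": \"$deltas\"}}}"
}
```

For example, `annotate_pv my-pv "P1D P7D"` would request a daily snapshot retained for a week, where `my-pv` stands in for your own PV's name.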

To add the annotation to a new PV, add the following annotation to your PVC:

backup.kubernetes.io/deltas: PT1H P2D P30D P180D

Here's an example of the PVC for the UniFi recipe, which includes 7 daily snapshots of the PV:

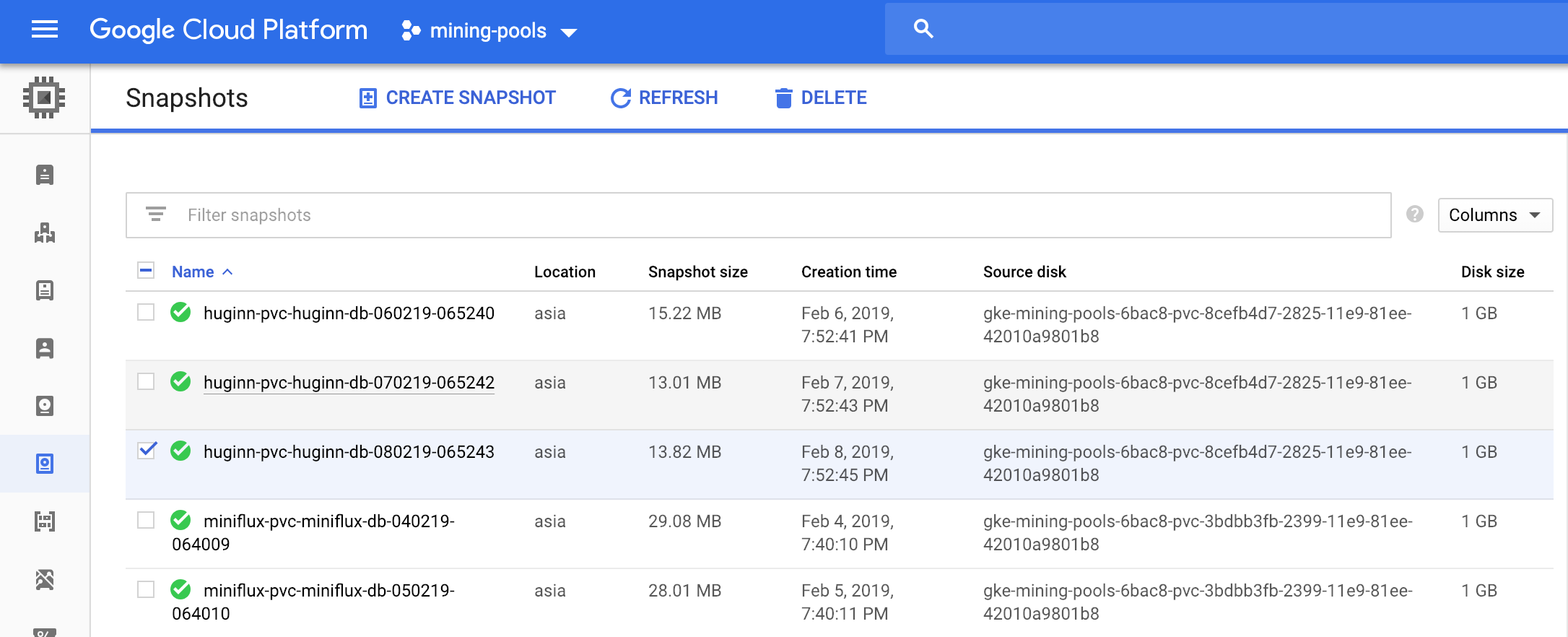

kind:PersistentVolumeClaimapiVersion:v1metadata:name:controller-volumeclaimnamespace:unifiannotations:backup.kubernetes.io/deltas:P1D P7Dspec:accessModes:-ReadWriteOnceresources:requests:storage:1Gi````And here's what my snapshot list looks like after a few days:{ loading=lazy }### Snapshot a non-Kubernetes volume (optional)If you're running traditional compute instances with your cloud provider (I do this for my poor man's load balancer), you might want to backup _these_ volumes as well.To do so, first create a custom resource, ```SnapshotRule```:````bashcat <<EOF | kubectl create -f -apiVersion:apiextensions.k8s.io/v1beta1kind:CustomResourceDefinitionmetadata:name:snapshotrules.k8s-snapshots.elsdoerfer.comspec:group:k8s-snapshots.elsdoerfer.comversion:v1scope:Namespacednames:plural:snapshotrulessingular:snapshotrulekind:SnapshotRuleshortNames:-srEOF````Then identify the volume ID of your volume, and create an appropriate ```SnapshotRule```:````bashcat <<EOF | kubectl apply -f -apiVersion:"k8s-snapshots.elsdoerfer.com/v1"kind:SnapshotRulemetadata:name:haproxy-badass-loadbalancerspec:deltas:P1D P7Dbackend:googledisk:name:haproxy2zone:australia-southeast1-aEOF
